
How We Build AI Agents That Credit Unions Can Actually Trust

Rahul Shah

Founding Product Manager

Every AI vendor pitching credit unions and community banks sounds the same. “Agentic”. “AI-powered”. “Conversational”. “Intelligent”. Underneath the marketing buzzwords, very few of these vendors can articulate a real strategy for how their agents should actually behave.

The “how” matters. AI in financial services is a trust product, not a feature product. The AI agents getting institutions from Stage 3 of AI adoption (a working use case) to Stage 4 (AI embedded in real workflows) are not the ones with the longest feature lists. They are the ones that are carefully orchestrated, thoughtfully integrated and consistently performant.

Here are the five principles we built our AI Document Collection Agent on. They are how we think every AI agent inside a credit union should be built.

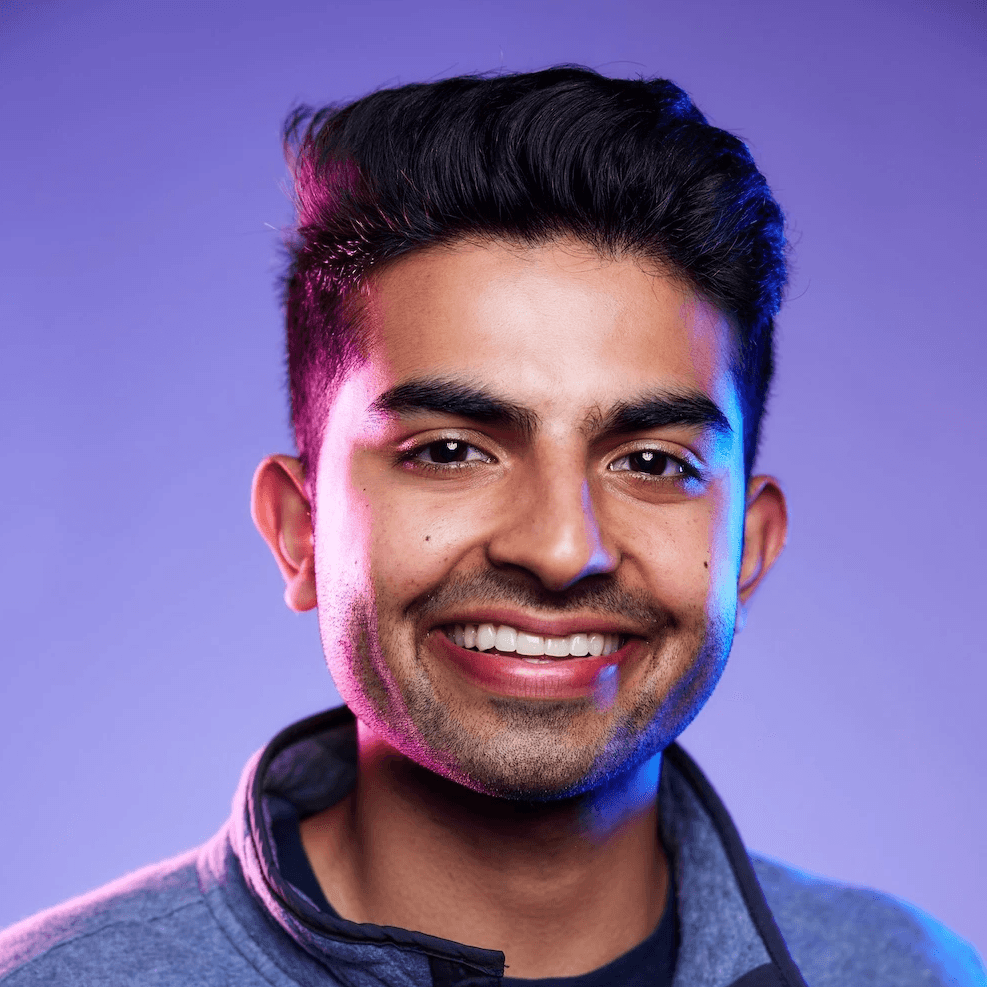

1. Maximal Transparency Into What the Agent Does

Every action our agent takes is visible in the customer dashboard. Every outbound call, every email, every document, every conversation summary, every escalation. A loan officer can scroll back through a member's file and see exactly when an agent made contact, what was asked for and the outcome of that conversation.

This is not a nice-to-have. Credit unions are regulated, member-facing institutions, and the version of AI that earns their trust is the one that shows its work. AI without an audit trail is a regulatory problem waiting to happen, and member trust is too hard-won to risk on a black box.
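To make "shows its work" concrete, here is a minimal sketch of an append-only audit trail. It is an illustration under stated assumptions, not a description of our production system: the `AuditEvent` and `AuditTrail` names, the field names, and the sample member ID are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class AuditEvent:
    """One agent action, stored as an immutable record (hypothetical schema)."""
    member_id: str
    timestamp: datetime
    channel: str   # e.g. "call", "email", "sms"
    action: str    # e.g. "document_requested", "escalated"
    outcome: str   # short summary of what happened


class AuditTrail:
    """Append-only log: events can be recorded and read, never edited."""

    def __init__(self) -> None:
        self._events: List[AuditEvent] = []

    def record(self, event: AuditEvent) -> None:
        self._events.append(event)

    def history(self, member_id: str) -> List[AuditEvent]:
        """Return one member's events, oldest first."""
        events = [e for e in self._events if e.member_id == member_id]
        return sorted(events, key=lambda e: e.timestamp)


trail = AuditTrail()
trail.record(AuditEvent("m-102", datetime(2025, 3, 4, 15, 0, tzinfo=timezone.utc),
                        "email", "follow_up_sent", "Sent upload link"))
trail.record(AuditEvent("m-102", datetime(2025, 3, 4, 14, 0, tzinfo=timezone.utc),
                        "call", "document_requested", "Asked for two recent pay stubs"))

# A loan officer scrolling back through the file sees events in time order.
for event in trail.history("m-102"):
    print(event.timestamp.isoformat(), event.channel, event.action, event.outcome)
```

The point of the frozen record and the missing update method is that the log can only grow. Whatever storage sits underneath, the dashboard view is a read of that history.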

2. Don't Plug In AI for the Sake of AI

We apply the same test internally to every part of our agent: Does AI make this materially better for the credit union or the member, or are we just checking a box?

If the answer is the latter, we use a traditional system. AI enhances our traditional systems; it does not replace them. Most of the agent's surface area looks like AI to a member because it speaks naturally and answers their questions. But under that surface, the parts that should be deterministic stay deterministic.

AI is excellent at open-ended language understanding and at classifying messy real-world inputs. It can be creative, weigh many options at once, and handle real-world edge cases that no one wrote a rule for. But it is wasteful and risky for predictable, repeatable tasks, where something like a rule engine is faster, cheaper, and more reliable. You don’t want an agent to be “creative” when a user asks “what is the status of my loan?”

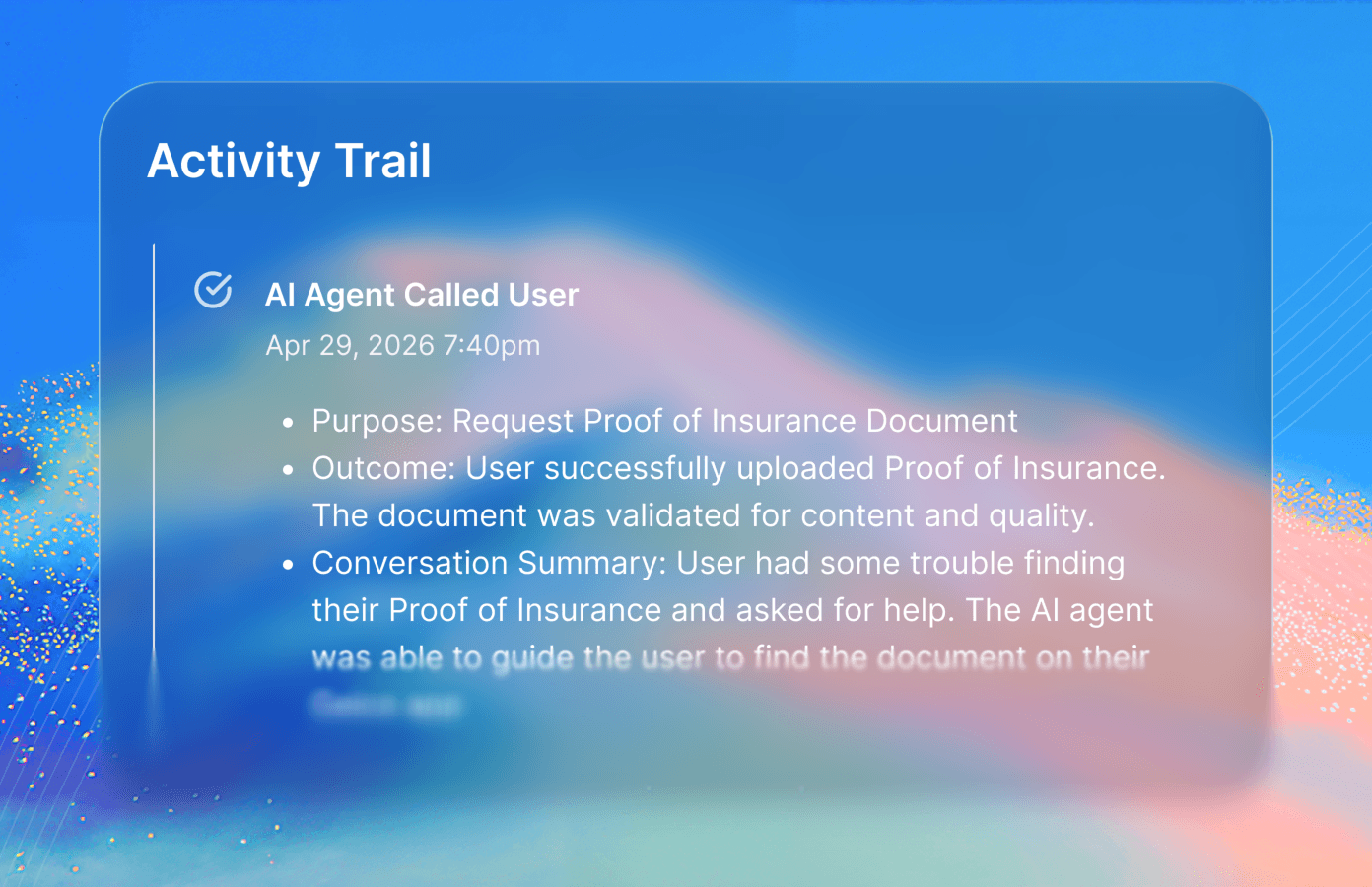

Take document collection, for example. We know exactly what documents are needed, and the requirements for each document type. We don’t need an AI agent to write the request email from scratch every time. Instead, we use a standardized template and allow the agent to fill in open-ended details within the template, such as contextual information from prior conversations.
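The template approach can be sketched in a few lines. This is an illustrative example, not our actual template: the wording, the `build_request_email` function, the field names, and the 300-character cap on the model-written note are all assumptions. It uses Python's standard `string.Template`.

```python
from string import Template

# Fixed wording lives in the template; it is the same for every member.
REQUEST_TEMPLATE = Template(
    "Hi $member_name,\n\n"
    "To keep your $loan_type application moving, we still need: $document_name.\n"
    "$context_note\n\n"
    "You can upload it here: $upload_link\n"
)


def build_request_email(member_name: str, loan_type: str, document_name: str,
                        upload_link: str, context_note: str = "") -> str:
    """Fill the template. Only context_note is expected to be model-written."""
    # Collapse whitespace and cap the length so the open-ended slot stays small.
    note = " ".join(context_note.split())[:300]
    return REQUEST_TEMPLATE.substitute(
        member_name=member_name,
        loan_type=loan_type,
        document_name=document_name,
        context_note=note,
        upload_link=upload_link,
    )


email = build_request_email(
    member_name="Dana",
    loan_type="auto loan",
    document_name="two recent pay stubs",
    upload_link="https://example.com/upload",
    context_note="As mentioned on our call,\n   stubs from your new employer are fine.",
)
print(email)
```

Everything a compliance reviewer cares about (what is requested, where it goes) comes from the system of record. The model contributes one bounded sentence of context.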

3. Think Beyond Words

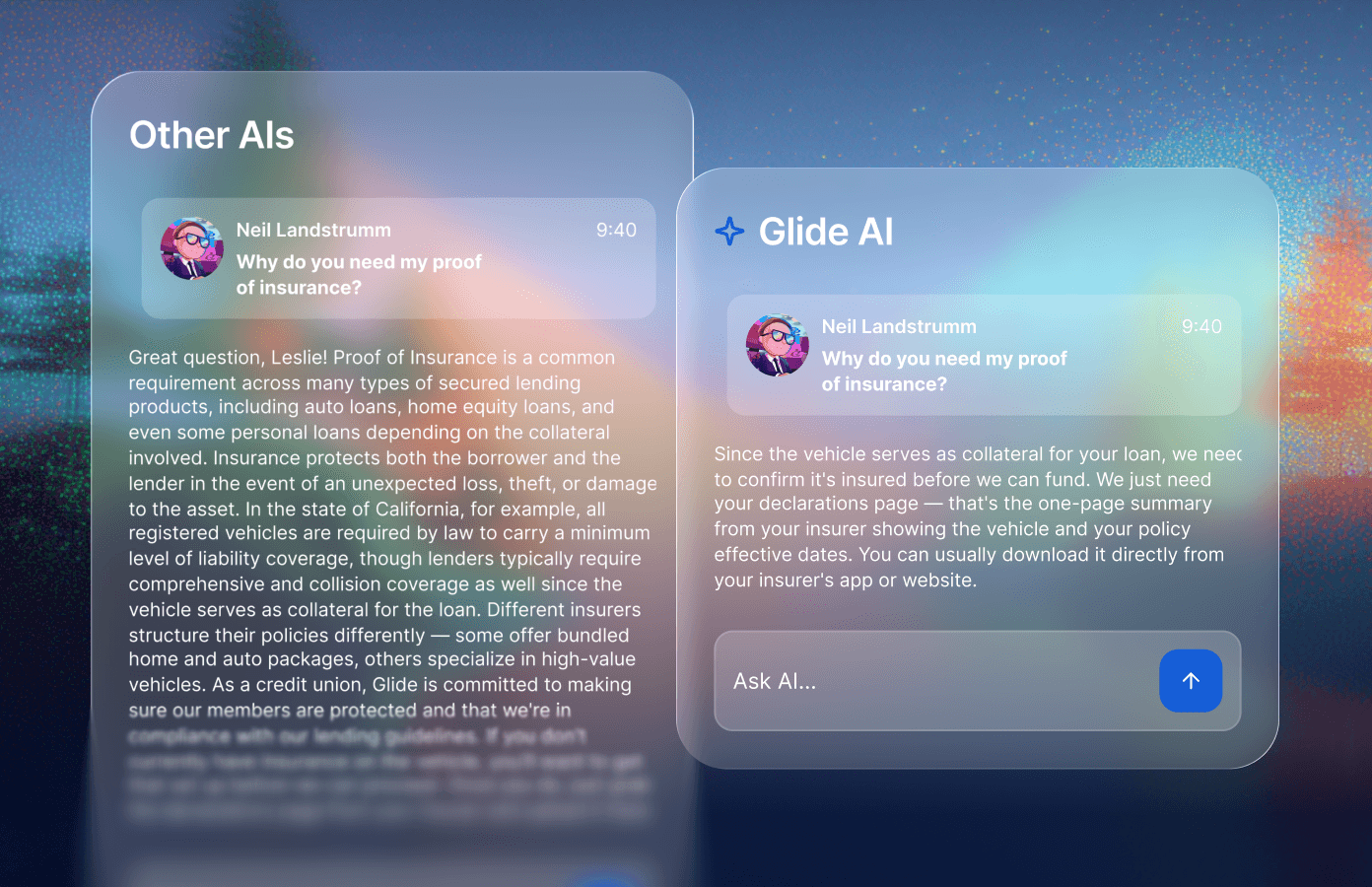

Conversational AI has trained the industry to default to text and voice for every interaction. But language is often the slowest, least precise way to communicate something. The best agents know when to stop talking and start showing.

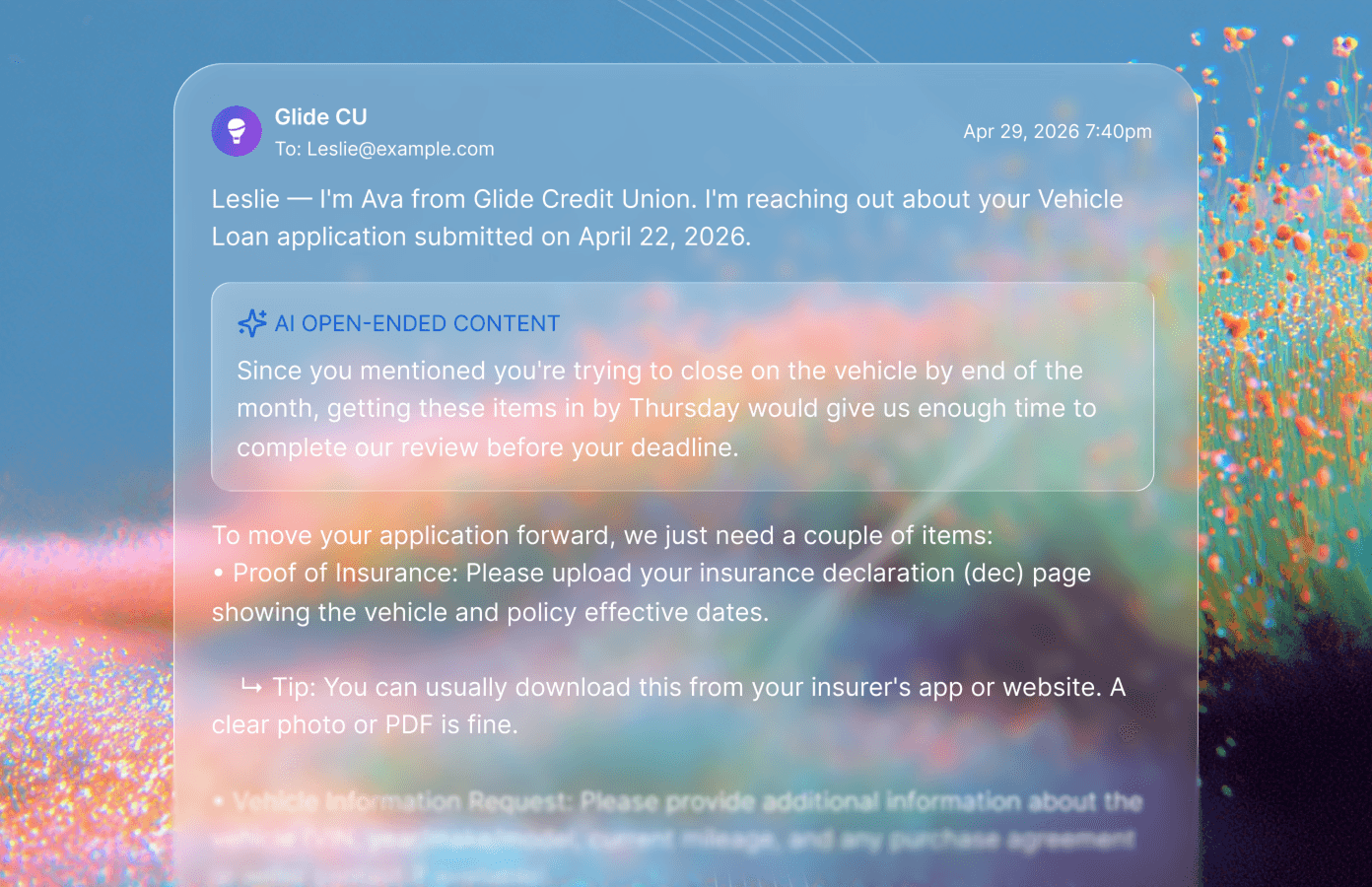

Take a question every loan officer hears constantly: "What's the status of my application?" The honest answer is rarely a sentence. The wrong way to handle this is to write: "Your application is currently in underwriting review pending receipt of two outstanding documents. Once those are received, your file will move into final review, which typically takes 2-3 business days before you receive a clear-to-close." The member finishes that paragraph with vague reassurance and no real understanding of where they are or whether they should be worried.

The right way is to show them. Render a progress tracker with their current step highlighted, completed steps checked, and the two outstanding documents listed right under the active step with upload buttons next to each. The member sees instantly where they are, what's holding things up, and what they personally can do about it. The status anxiety disappears, and so does the follow-up phone call.

The same principle applies across the workflow. Instead of describing which documents are still missing, show a checklist with green checks and empty boxes. Instead of explaining why an income calculation came out a certain way, show the math. Instead of telling a member their application is "in underwriting review," show them where they are in the process and what comes next.
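One way to think about "showing" is that the agent returns structured data for the interface to render, rather than a paragraph. The sketch below is hypothetical: the step names in `STEPS`, the `build_tracker` function, and the payload shape are assumptions chosen to mirror the example above, not our actual schema.

```python
from typing import Dict, List

# Hypothetical workflow steps, in order.
STEPS = [
    "Application",
    "Document collection",
    "Underwriting review",
    "Final review",
    "Clear to close",
]


def build_tracker(current_step: str, outstanding_documents: List[str]) -> Dict:
    """Build a progress-tracker payload for a UI to render."""
    current_index = STEPS.index(current_step)  # raises ValueError if unknown
    steps = []
    for i, name in enumerate(STEPS):
        if i < current_index:
            state = "completed"
        elif i == current_index:
            state = "current"
        else:
            state = "upcoming"
        step = {"name": name, "state": state}
        if state == "current":
            # Outstanding documents sit under the active step, each with an action.
            step["outstanding_documents"] = [
                {"name": doc, "action": "upload"} for doc in outstanding_documents
            ]
        steps.append(step)
    return {"steps": steps}


tracker = build_tracker("Underwriting review", ["Pay stub", "Bank statement"])
for step in tracker["steps"]:
    print(step["state"].ljust(10), step["name"])
```

Because the status is derived from the ordered step list and not generated as text, the same member always sees the same answer for the same file state.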

This is not about replacing conversation. Voice and text are still the right interface for many cases. But when the answer is something a member can see in a second, making them read a paragraph is a failure of design. A good agent picks the right medium for the moment.

4. Fail Gracefully

Members should not have to work around AI limits. The burden of edge cases belongs to the agent, not the member. This shows up in three places.

First is channel switching. If a member cannot take a call, the agent automatically follows up by email or SMS, and the conversation continues with full context. The member experiences one agent across channels, not three disconnected outreaches from three disconnected systems.

Second is the unknown. If the member asks the agent something it does not know, or asks to speak with a person, the agent hands off to a staff member with a brief summary of what has happened so far. It does not invent an answer.

Third is recovery. If a member hangs up mid-call and uploads their document an hour later, the email agent picks up where the voice agent left off. From the member's perspective, the conversation never broke.
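The three behaviors above share one idea: a single conversation record that every channel reads from and writes to. Here is a minimal sketch under stated assumptions. The `Conversation` class, the `reach_member` and `answer_or_handoff` functions, the channel order, and the stubbed senders are all hypothetical, and a real agent would decide what it "knows" with far more than a dictionary lookup.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Sequence


@dataclass
class Conversation:
    """Shared context: every channel appends to the same event list."""
    member_id: str
    events: List[str] = field(default_factory=list)


def reach_member(conv: Conversation,
                 senders: Dict[str, Callable[[Conversation], bool]],
                 order: Sequence[str] = ("call", "email", "sms")) -> Optional[str]:
    """Try each channel in order; return the first that gets through, else None."""
    for channel in order:
        delivered = senders[channel](conv)
        conv.events.append(f"{channel}: {'delivered' if delivered else 'no answer'}")
        if delivered:
            return channel
    return None


def answer_or_handoff(conv: Conversation, question: str,
                      known_answers: Dict[str, str]) -> Dict[str, str]:
    """Answer only from known content; otherwise hand off with a summary."""
    answer = known_answers.get(question.strip().lower())
    if answer is not None:
        conv.events.append(f"answered: {question}")
        return {"type": "answer", "text": answer}
    # Never guess: pass the question to staff with what has happened so far.
    conv.events.append(f"handoff: {question}")
    return {"type": "handoff", "summary": "; ".join(conv.events)}


conv = Conversation("m-102")
senders = {
    "call": lambda c: False,   # stub: member did not pick up
    "email": lambda c: True,   # stub: email delivered
    "sms": lambda c: True,
}
used = reach_member(conv, senders)
reply = answer_or_handoff(conv, "Can I change my co-signer?", {
    "which pay stubs do you need?": "The two most recent ones.",
})
print(used, reply["type"])
print(reply["summary"])
```

The staff member who picks up the handoff reads the same event list the agent wrote to, which is what lets the member experience one continuous conversation.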

Graceful failure is what separates an agent that works in a demo from an agent that survives contact with real members on real days.

5. Tightly Managed Context

There is a temptation, especially as model context windows have grown, to give the agent everything. The member's full history. Every document on file. The credit union's entire policy manual. We have found the opposite to be true. Bigger context is not better context.

The agent gets exactly what it needs to do the job in front of it: the loan details, the member's name, the document being requested, the tips for finding that document, the FAQs likely to come up. That is it.

Out of scope is anything that does not directly serve the task at hand. This keeps responses tight and on-topic, reduces the surface area for hallucination, and minimizes sensitive data exposure. It also makes the agent easier to evaluate because we know exactly what it knew when it made each decision.

Information that is useful only occasionally goes behind a tool call. The agent can pull it when needed and is not encumbered by it from the get-go.
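As a rough sketch of this split, the example below builds the default context from an allowlist and leaves rarely needed material behind a tool. The field names in `ALLOWED_FIELDS`, the `lookup_policy` tool, and the sample record are assumptions for illustration only.

```python
from typing import Callable, Dict, List

# The only fields the agent sees by default (hypothetical names).
ALLOWED_FIELDS = (
    "member_name",
    "loan_details",
    "document_requested",
    "document_tips",
    "faqs",
)


def build_context(record: Dict) -> Dict:
    """Copy allowlisted fields only; everything else stays out of the prompt."""
    return {key: record[key] for key in ALLOWED_FIELDS if key in record}


def lookup_policy(record: Dict, topic: str) -> str:
    """Hypothetical tool: fetch one policy section on demand."""
    return record.get("policy_manual", {}).get(topic, "No policy found for this topic.")


TOOLS: Dict[str, Callable[[Dict, str], str]] = {"lookup_policy": lookup_policy}


def call_tool(name: str, record: Dict, argument: str, log: List[str]) -> str:
    """Run a tool and log the call, so reviewers know what the agent pulled."""
    log.append(f"tool:{name}({argument})")
    return TOOLS[name](record, argument)


record = {
    "member_name": "Dana",
    "loan_details": {"type": "auto loan", "amount": 18000},
    "document_requested": "two recent pay stubs",
    "document_tips": ["Check your payroll portal under 'Pay history'."],
    "faqs": {"Can I send a photo?": "Yes, as long as all four corners are visible."},
    "ssn": "000-00-0000",  # placeholder value; never enters the context
    "policy_manual": {"co-signers": "Co-signer changes require a new application."},
}

context = build_context(record)
tool_log: List[str] = []
policy = call_tool("lookup_policy", record, "co-signers", tool_log)
print(sorted(context))
print(tool_log, policy)
```

An allowlist fails closed: a new field added to the member record stays out of the agent's context until someone deliberately adds it.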

This is partly a quality argument, partly a safety argument, and partly a humility argument. Each agent needs to be very good at one job, not a generalist.

What This Adds Up To

A truly effective and dependable AI agent is the result of consistent and deliberate design choices made by a team that understands where AI can shine and, just as importantly, where it cannot.

These five principles — transparency, intentional use of AI, communicating beyond words, failing gracefully, and tightly managed context — are the direction we have chosen for our AI Document Collection Agent, and the lens we apply to every agent we build at Glide. They are how we make sure the AI you put in front of your members is one you'd be comfortable putting your name on.

For the credit unions already building with us, this is the standard you should expect from every agent we ship. If something we release ever falls short of one of these principles, we want to hear about it. That feedback is how the product gets better.

For institutions thinking about where AI fits in your roadmap, we'd encourage you to use these principles as a checklist for any agent you evaluate, including ours:

Can your team see exactly what the agent did, when, and why?

Is AI being used where it actually adds value, or just where it's easy to market?

When the agent doesn't know something, what happens next?

What context does the agent have access to — and what is deliberately kept out?

How does the agent recover when a member switches channels, drops off, or comes back days later?

These are the questions we ask ourselves before anything ships, and they are the questions worth asking of any AI in a member-facing role.

If you'd like to see how our Document Collection Agent puts these principles into practice, reach out to your Glide team or book a walkthrough. And if you're earlier in your AI journey, our Practical Guide to AI for Community Banks and Credit Unions is a good place to start.